- 12 May 2026

AI in technical education: the risk of cognitive offloading and tempo as a matter of professional ethics

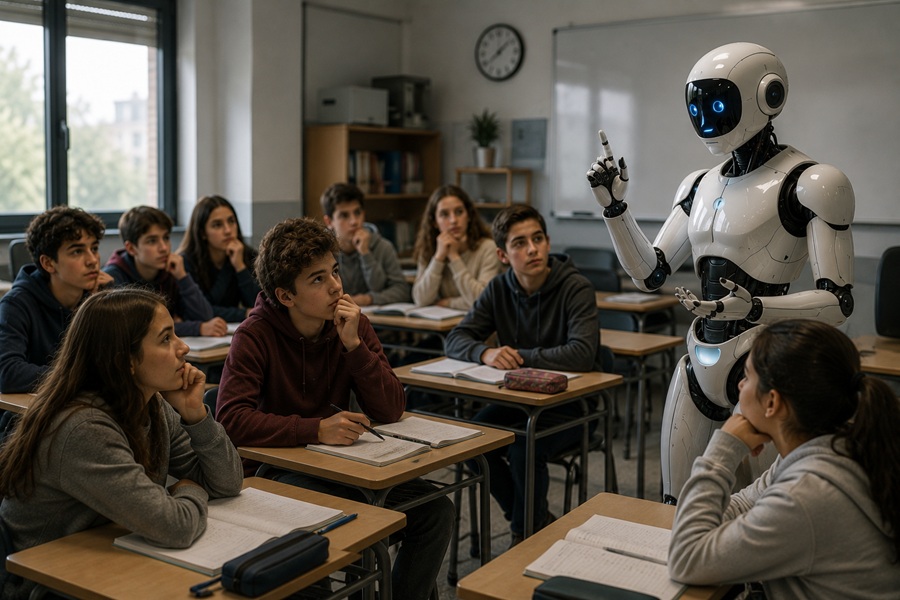

Image created using AI.

Those of us working in education have been debating for some time whether artificial intelligence (AI) should be brought into the classroom. But in technical and vocational education—where we train future professionals for technological fields—the real question may not be whether, but when. In a climate shaped by institutional and media pressure to innovate, and by an increasingly competitive labour market, that distinction matters.

As I have argued elsewhere (Hernández-Fernández, 2026), no educational technology should be an end in itself. Except in those subjects, or specific parts of the curriculum, where technology is itself the object of study (as in some of our degree and master’s programmes), it is simply a means. Introduced too early, before students have acquired the necessary conceptual foundations, it becomes counterproductive: it breeds dependency, encourages superficial learning, and creates a misleading sense of understanding. The question, then, is not “Should my students use AI?”, but “Have they learned enough to use it critically?”

We readily accept that giving a calculator to a primary pupil who cannot yet multiply undermines both their learning and their mental arithmetic. The same applies to students learning the basics of programming: using AI as a shortcut ultimately holds them back. Tempo—the right moment of introduction—is the first professional and ethical criterion for any teacher seeking to use AI responsibly.

Cognitive offloading in the age of generative AI

This is not a hypothetical concern. The evidence is clear: when students use generative AI as a shortcut, they learn less—or not at all. The phenomenon is known as cognitive offloading (Risko & Gilbert, 2016): the outsourcing of mental effort to an external system. Retrieval, synthesis, reasoning, planning, creativity and critical judgement—tasks that should be internalised—are delegated to the machine. In the short term, performance may even improve; over time, however, higher-order thinking—of the kind mapped in Bloom’s taxonomy—begins to erode (Gerlich, 2025).

Generative AI offers fluency on demand. And that is precisely the problem. The most demanding tasks—the desirable difficulties identified in educational research—are those that consolidate learning, particularly in higher education. When students sidestep them, they may still secure good marks, but they do not develop real competence. There is already evidence that sustained, unregulated use of AI leads to weaker memory retention and poorer reasoning (Lodge & Loble, 2026). Fan et al. (2025) go further, describing a form of metacognitive laziness: the gradual erosion of the self-regulation on which deep learning depends.

The challenge for education is therefore twofold: to prevent AI from becoming a permanent cognitive crutch, while fostering a classroom culture in which students can be open about how they use it. Honest self-assessment—Have I really learned this? Do I understand it well enough to reproduce it without assistance?—is itself a skill that must be explicitly taught, particularly in technical education such as that offered at the UPC.

A professional code of ethics for teachers: technology as a professional responsibility

Many teachers are unaware that a professional code of ethics exists to guide their practice. In 2021, the Col·legi Oficial de Doctors i Llicenciats en Filosofia i Lletres i en Ciències de Catalunya approved such a code, explicitly committing teachers to the responsible and ethical use of technology (Hernández-Fernández, 2025). This is not a minor point. It recognises that every technological choice made in the classroom shapes not only the professionals we train, but also broader concerns such as citizenship, privacy, personal autonomy and educational equity.

In practice, this commitment entails concrete responsibilities: keeping one’s digital competences up to date; understanding data protection frameworks; preventing plagiarism; recognising algorithmic bias and the corporate interests embedded in the tools we recommend; and safeguarding the confidentiality of academic information. Above all, it demands something more fundamental: ensuring that technology serves learning, rather than the other way round.

This, in turn, requires a careful rethink of the tasks we set. Can they be completed at the click of a button? If so, what are they really assessing? What kinds of activities genuinely promote learning under present conditions?

The use of AI in education is neither optional nor confined to technical disciplines. It cuts across the whole curriculum. There may still be exceptions—but only for now. And yet, deontological reflection remains largely absent: from initial teacher education, from continuing professional development, from legislation—and, perhaps most worryingly, from the everyday thinking of many teachers.

Conclusion

What is needed is a clear framework: tempo as a pedagogical principle, and professional ethics as a guide to practice. We must weigh not only what AI brings to the classroom, but also what it may take away—including the risks associated with cognitive offloading.

Paradoxically, this means resisting the temptation to use AI in a casual or purely fashionable way. Its environmental and educational costs are real. Instead, it should be integrated thoughtfully, pragmatically and critically—aligned with the needs of each discipline and with the realities of professional practice, both present and future.

It also means educating students about its broader implications: environmental, social, techno-ethical and cognitive. Above all, it means creating the conditions for genuine learning—learning that requires effort. In this context, the machine should function as support, reinforcement and enhancement—not as a substitute for thinking, nor as a shortcut to passing.

Critical thinking in teaching begins with a willingness to question the unreflective adoption of new technologies. We would do well to apply to AI the precautionary principle set out in the Barcelona Declaration (Steels & López de Mántaras, 2018). In education, it is often better to arrive later—with evidence—than to be first without it.

References:

Fan, Y., Tang, L., Le, H., et al. (2025). Beware of metacognitive laziness: Effects of generative artificial intelligence on learning motivation, processes, and performance. British Journal of Educational Technology, 56(2), 489–530. https://doi.org/10.1111/bjet.13544

Gerlich, M. (2025). AI tools in society: Impacts on cognitive offloading and the future of critical thinking. Societies, 15(1), 1–28. https://doi.org/10.3390/soc15010006

Hernández-Fernández, A. (2026, January). When to use technology in the classroom. Educational Evidence. https://educationalevidence.com/en/when-to-use-technology-in-the-classroom/

Hernández-Fernández, A. (2025). Tecnologia, tecnoètica i codi deontològic docent. Revista de tecnologia, 13, 56–59. http://hdl.handle.net/2117/442517

Lodge, J. M., & Loble, L. (2026). Artificial intelligence, cognitive offloading and implications for education. Network for Quality Digital Education / University of Technology Sydney. https://www.uts.edu.au/news/2026/03/experts-warn-unstructured-ai-use-in-schools-risks-cognitive-atrophy

Risko, E. F., & Gilbert, S. J. (2016). Cognitive offloading. Trends in Cognitive Sciences, 20(9), 676–688. https://doi.org/10.1016/j.tics.2016.07.002

Steels, L., & López de Mántaras, R. (2018). The Barcelona declaration for the proper development and usage of artificial intelligence in Europe. AI Communications, 31(6), 485–494. http://doi.org/10.3233/aic-180607

Source: educational EVIDENCE

Rights: Creative Commons